
How to Measure Purchase Intent: 5 Methods Compared

Someone rates your product a 5 out of 5 on a purchase intent survey. Feels great, right? That score means they have roughly a 50% chance of actually buying, according to Ramanujam and Tacke's research in Monetizing Innovation. Half the people who say "definitely would buy" don't follow through. Most founders rely on survey-based intent data without understanding how much it overpredicts real demand.

This guide compares five methods for measuring purchase intent, from traditional Likert scales to AI synthetic panels. Each method gets accuracy data, cost ranges, and a clear recommendation for when it works best. No method is perfect, but some are far more reliable than others.

If you're just getting started, our guide on how to validate a product idea covers 8 methods ranked by cost and speed.

Key Takeaways

- Only 10% of stated purchase intentions convert to actual purchases (Chandon, Morwitz & Reinartz, 2005)

- Five measurement methods compared by cost, speed, and accuracy

- AI synthetic consumer panels achieve 85%+ distributional similarity to human responses

- Behavioral signals outperform self-reported data, but require an existing product

What is purchase intent and why does it matter?

Purchase intent data has become a core budget line for product and marketing teams. 40% of businesses now dedicate more than half their lead generation budget to intent data, according to Shortlister's 2025 industry report. That spending reflects a simple truth: knowing who wants to buy, and how badly, drives every decision from pricing to launch timing.

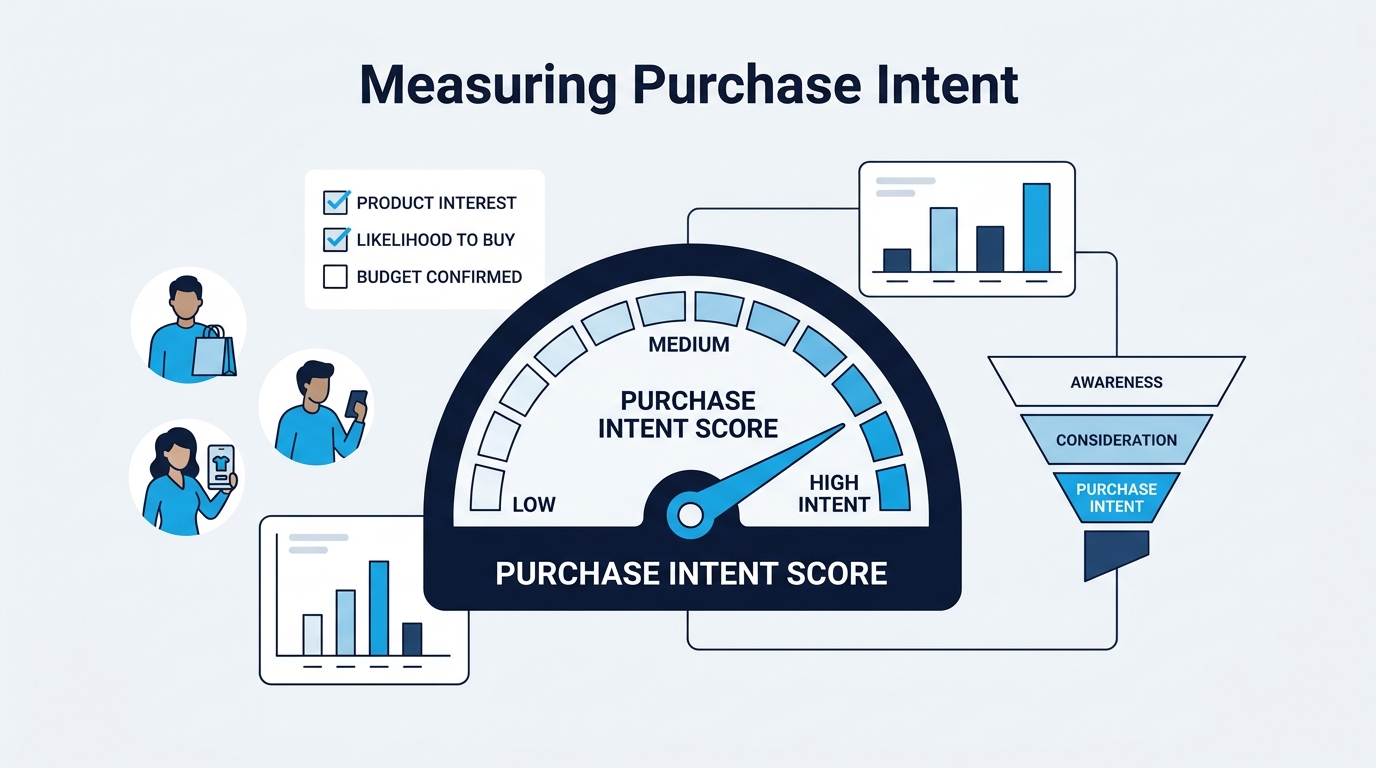

Purchase intent measures how likely a person is to buy a specific product or service within a defined timeframe. It sits between awareness ("I know this exists") and action ("I just bought it"). The gap between those two stages is where most products die quietly.

But why invest so much in measuring something that's notoriously inaccurate? Because even imperfect intent data beats guessing. The question isn't whether to measure purchase intent. It's which method gives you the most reliable signal for the least money and time.

This is one reason most new products fail: teams confuse interest with demand.

Purchase intent vs purchase behavior

These two concepts get confused constantly. Purchase intent is what people say they'll do. Purchase behavior is what they actually do. The gap between them is real, measurable, and consistent across industries.

Intent data tells you about future demand. Behavioral data tells you about past actions. You need both, but they answer different questions. Intent data helps you forecast and plan. Behavioral data helps you optimize and iterate.

The problem is that most teams treat intent data as if it were behavioral data. They take a survey score at face value and build revenue projections around it. That's how you end up with a product nobody buys.

How do traditional purchase intent surveys work?

Traditional surveys remain the most common method for measuring purchase intent, but their accuracy is worse than most teams realize. Research by Chandon, Morwitz & Reinartz (2005) found that only 10% of consumers who state purchase intent actually follow through with a purchase. Put differently, 90% of stated intentions never become a sale.

The Likert scale approach

The standard purchase intent survey uses a 5-point Likert scale:

| Score | Label |
|---|---|
| 5 | Definitely would buy |
| 4 | Probably would buy |
| 3 | Might or might not buy |
| 2 | Probably would not buy |
| 1 | Definitely would not buy |

"Top-box scoring" counts only the percentage of respondents who select 5 ("definitely would buy"). "Top-two-box scoring" counts those who select 4 or 5. A typical consumer product might see 30-40% top-two-box scores in a well-designed survey. That sounds promising until you apply the conversion correction.

From experience: In practice, we've found that applying a calibration factor of 0.3 to 0.5 on top-box scores gives you a more realistic demand estimate. If 40% of respondents say "definitely would buy," expect 12-20% to actually purchase. Even that range is optimistic for entirely new product categories.
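The calibration arithmetic above is simple enough to sketch in a few lines. This is a minimal illustration, not a forecasting model: the function name `calibrated_demand_range` is hypothetical, and the default factors are just the 0.3 to 0.5 range quoted above.

```python
def calibrated_demand_range(top_box_share, low_factor=0.3, high_factor=0.5):
    """Return (low, high) expected purchase share for a given top-box share.

    top_box_share is a fraction between 0 and 1 (0.40 means 40%).
    The default factors are the 0.3-0.5 calibration range from the text.
    """
    if not 0.0 <= top_box_share <= 1.0:
        raise ValueError("top_box_share must be between 0 and 1")
    return top_box_share * low_factor, top_box_share * high_factor


# The example from the text: 40% of respondents say "definitely would buy".
low, high = calibrated_demand_range(0.40)
print(f"Expected purchasers: {low:.0%} to {high:.0%}")
```

For a brand-new product category, you would likely pass a lower `low_factor`, since even this range is optimistic there.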

Price sensitivity methods

Two methods go beyond simple "would you buy?" questions. The Van Westendorp Price Sensitivity Meter asks four pricing questions to find the acceptable price range. The Gabor-Granger technique tests purchase intent at specific price points to build a demand curve.

Both methods produce more useful data than a basic Likert scale. They tell you at what price people will buy, not just whether they'd buy at some undefined price. But they still rely on self-reported data, which means they still overpredict actual demand.
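To make the Gabor-Granger idea concrete, here is a rough sketch of turning price-point responses into a demand curve and picking the price with the highest revenue per respondent. The survey numbers are made up for illustration, and `revenue_maximizing_price` is a hypothetical helper, not part of any standard tool.

```python
def revenue_maximizing_price(demand_curve):
    """Pick the tested price with the highest revenue per respondent.

    demand_curve maps each tested price to the share of respondents
    (0 to 1) who said they would buy at that price.
    """
    return max(demand_curve, key=lambda price: price * demand_curve[price])


# Hypothetical survey results: share willing to buy at each tested price.
demand_curve = {10.0: 0.60, 15.0: 0.45, 20.0: 0.30, 25.0: 0.15}

best_price = revenue_maximizing_price(demand_curve)
for price, share in demand_curve.items():
    print(f"${price:.2f}: {share:.0%} would buy, revenue index {price * share:.2f}")
print(f"Revenue-maximizing tested price: ${best_price:.2f}")
```

Because the shares are still self-reported, the absolute revenue numbers will be inflated. The relative ranking of price points is the more trustworthy output.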

Why is purchase intent so hard to measure accurately?

Even the most carefully designed survey overpredicts demand. Ramanujam and Tacke's analysis showed that respondents who give a 5 out of 5 purchase intent rating have only a 50% actual purchase likelihood. At 4 out of 5, that drops to roughly 10-20%. The problem isn't bad survey design. It's human psychology.
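One way to apply these figures is to weight each rating by its purchase likelihood instead of counting raw scores. The sketch below uses the 50% figure for a 5 and the midpoint of the 10-20% range for a 4. Treating ratings of 3 and below as zero is an assumption made here for simplicity, not something the research above states.

```python
# Purchase likelihood by rating. Values for 5 and 4 follow the figures
# cited in the text (15% is the midpoint of 10-20%). Ratings 3 and below
# are ASSUMED to convert at 0% for this illustration.
PURCHASE_LIKELIHOOD = {5: 0.50, 4: 0.15, 3: 0.0, 2: 0.0, 1: 0.0}


def expected_purchase_rate(response_counts):
    """Estimate the share of respondents who would actually buy.

    response_counts maps a rating (1-5) to the number of respondents
    who gave that rating.
    """
    total = sum(response_counts.values())
    if total == 0:
        raise ValueError("no responses")
    expected_buyers = sum(
        PURCHASE_LIKELIHOOD[score] * count
        for score, count in response_counts.items()
    )
    return expected_buyers / total


# Hypothetical survey of 200 respondents.
counts = {5: 40, 4: 60, 3: 50, 2: 30, 1: 20}
rate = expected_purchase_rate(counts)
print(f"Raw top-two-box: {(counts[5] + counts[4]) / 200:.0%}")
print(f"Likelihood-weighted estimate: {rate:.1%}")
```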

The intention-action gap

Three cognitive biases drive the gap between stated intent and actual behavior.

Hypothetical bias is the biggest factor. When there's no real money on the table, people systematically overstate their willingness to pay. Dozens of studies confirm this pattern across product categories, geographies, and demographics.

Social desirability bias pushes responses upward too. People want to seem like good, interesting, forward-thinking consumers. Saying "yes, I'd buy that innovative product" feels better than saying "no, I'll stick with what I have."

Context collapse strips away the real-world friction that prevents purchases. In a survey, there's no competing product on the shelf, no spouse questioning the expense, no three-day delivery wait. The buying context is artificially clean.

The survey fraud crisis

Here's a problem that doesn't get enough attention. Survey quality has collapsed. Survey fraud research suggests that usable survey responses have declined from 75% to roughly 10% over the past decade (PMC, 2024). Bots, professional survey takers, and inattentive respondents have flooded online panels.

The academic research backs this up. A 2024 psychology study found that researchers now discard 38% of collected survey data due to quality concerns. That's more than a third of responses thrown out before analysis even begins.

The hidden problem: Survey fraud doesn't just reduce sample sizes. It introduces systematic bias. Professional survey takers tend to speed through questions and select positive responses. This means your purchase intent scores aren't just noisy - they're biased upward. Combined with the natural hypothetical bias, you're getting doubly inflated numbers. This is why relying on a single survey-based method for purchase intent is increasingly risky.

What does this mean for your product decisions? It means you can't trust a single survey-based measurement anymore. You need either behavioral validation or multiple independent signals pointing in the same direction.

What are digital and behavioral intent signals?

Behavioral data sidesteps the intention-action gap entirely. Instead of asking people what they'd do, you observe what they actually do. Campaigns using intent data achieve 220% higher click-through rates compared to standard targeting, according to Insight Collective's 2025 benchmarks. Real behavior predicts real behavior.

First-party behavioral signals

Your own product and marketing channels generate the most reliable intent signals. These include:

- Landing page engagement - time on page, scroll depth, return visits

- Pricing page visits - the single strongest intent signal on most SaaS sites

- Cart additions without purchase - high intent with a specific friction point

- Feature page interactions - which capabilities drive the most interest

- Email engagement patterns - open rates and click-throughs on product content

The advantage of first-party data is accuracy. Someone visiting your pricing page three times in a week is telling you something no survey can capture. The disadvantage is obvious: you need an existing product or at least a live landing page to collect this data.
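If you already collect these signals, a simple weighted score is one way to rank visitors by intent. The sketch below is illustrative only: the `VisitorActivity` fields and the weights are hypothetical, chosen so that pricing page visits count most, in line with the list above. Real weights should come from your own conversion data.

```python
from dataclasses import dataclass


@dataclass
class VisitorActivity:
    """Counts of first-party signals for one visitor (hypothetical schema)."""
    pricing_page_visits: int = 0
    cart_additions: int = 0
    feature_page_views: int = 0
    return_visits: int = 0
    email_clicks: int = 0


# Hypothetical weights, NOT derived from data. Pricing page visits are
# weighted highest because the text calls them the strongest signal.
SIGNAL_WEIGHTS = {
    "pricing_page_visits": 5,
    "cart_additions": 4,
    "feature_page_views": 2,
    "return_visits": 1,
    "email_clicks": 1,
}


def intent_score(activity):
    """Sum each signal count multiplied by its weight."""
    return sum(
        weight * getattr(activity, name)
        for name, weight in SIGNAL_WEIGHTS.items()
    )


# Someone who visited the pricing page three times and returned twice.
visitor = VisitorActivity(pricing_page_visits=3, return_visits=2)
print(intent_score(visitor))
```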

Third-party intent data

Platforms like Bombora and G2 aggregate behavioral signals across thousands of websites to identify companies researching specific topics. Sales teams using third-party intent data convert leads 82% faster than teams using demographic targeting alone (Insight Collective, 2025).

Third-party intent works best for B2B products where the buying cycle is long and involves multiple stakeholders. It's less useful for consumer products or pre-launch validation. And it comes with a meaningful price tag, typically $10,000-$50,000 per year for enterprise plans.

Want to see what a purchase intent report looks like? Check out a sample report.

Worth noting: Behavioral signals are the most reliable method for accuracy, but they only work when you have an existing product generating traffic. If you're pre-launch, you need a different approach.

How does AI-powered purchase intent measurement work?

AI synthetic consumer research is the newest method for measuring purchase intent, and early validation data is strong. Maier et al. validated this approach against 9,300 real human responses across 57 surveys (Maier et al., 2025), finding that AI synthetic consumer panels achieve 85%+ distributional similarity to human survey responses. That's close enough to use as a reliable screening tool.
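To give a feel for what "distributional similarity" can mean, the sketch below compares two sets of Likert responses using one minus the total variation distance between their rating distributions. This metric is chosen here purely for illustration. It is an assumption, and not necessarily the measure used by Maier et al.

```python
def likert_distribution(responses, scale=(1, 2, 3, 4, 5)):
    """Return the share of responses at each point of the scale."""
    n = len(responses)
    if n == 0:
        raise ValueError("no responses")
    return [sum(1 for r in responses if r == point) / n for point in scale]


def distributional_similarity(human, synthetic):
    """1 minus total variation distance: 1.0 means identical distributions."""
    p = likert_distribution(human)
    q = likert_distribution(synthetic)
    total_variation = 0.5 * sum(abs(a - b) for a, b in zip(p, q))
    return 1.0 - total_variation


# Hypothetical ratings from 10 human and 10 synthetic respondents.
human = [5, 5, 4, 4, 4, 3, 3, 3, 2, 1]
synthetic = [5, 4, 4, 4, 4, 3, 3, 3, 2, 1]
print(f"Similarity: {distributional_similarity(human, synthetic):.0%}")
```

The point of comparing distributions, instead of individual answers, is that a screening tool only needs to reproduce how a population splits across the scale.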

What are synthetic consumer panels?

Synthetic consumer panels use large language models to simulate consumer responses. Each "synthetic consumer" is conditioned on specific demographics - age, income, location, education, interests - and asked to evaluate a product concept. The system collects purchase intent scores, objections, and qualitative feedback from hundreds of these simulated consumers.

The key difference from a chatbot or simple prompt is demographic conditioning. A synthetic consumer representing a 28-year-old urban professional with $75,000 household income responds differently than one representing a 55-year-old suburban retiree. The demographic targeting creates realistic variation in responses.
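Demographic conditioning can be pictured as building a different prompt for each simulated respondent. The sketch below only constructs the prompt text and does not call any model. The `Persona` fields, the wording of the prompt, and the example concept are all hypothetical, and real systems may condition on demographics quite differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    """Demographic profile for one synthetic consumer (hypothetical schema)."""
    age: int
    location: str
    household_income: int
    education: str
    interests: tuple


def build_persona_prompt(persona, concept):
    """Return a prompt asking a model to answer as the given persona."""
    interests = ", ".join(persona.interests)
    return (
        f"You are a {persona.age}-year-old living in {persona.location} "
        f"with a household income of ${persona.household_income:,}. "
        f"Education: {persona.education}. Interests: {interests}.\n"
        f"Product concept: {concept}\n"
        "On a scale of 1 (definitely would not buy) to 5 (definitely would buy), "
        "how likely are you to buy this? Give a rating and your main objection."
    )


urban_professional = Persona(28, "an urban area", 75_000, "bachelor's degree",
                             ("fitness", "travel"))
suburban_retiree = Persona(55, "a suburb", 60_000, "high school diploma",
                           ("gardening", "golf"))

concept = "A $29/month meal-planning app"  # made-up example concept
for persona in (urban_professional, suburban_retiree):
    print(build_persona_prompt(persona, concept))
    print("---")
```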

To understand the methodology in depth, read the science behind synthetic consumer research.

When AI measurement makes sense

AI synthetic panels fill a specific gap in the validation toolkit. They work best when:

- You're pre-launch and have no behavioral data to analyze

- Your budget rules out traditional survey panels ($5,000-$15,000)

- You need results in minutes, not weeks

- You want to screen multiple concepts before investing in deeper research

How we approach this: The will.it.sell platform uses a methodology called Faceted Likert Rating (FLR), which scores purchase intent by evaluating synthetic consumer responses across six calibrated dimensions - buying likelihood, interest, appeal, consideration, perceived value, and choice preference. This approach produces a calibrated score rather than raw survey percentages, which helps account for the hypothetical bias that plagues traditional methods.

The limitation is straightforward: AI panels are a screening tool, not a final verdict. They tell you which ideas are worth investigating further. They don't replace talking to real customers or observing real purchase behavior.

How do these methods compare?

Choosing the right method depends on your stage, budget, and what you're trying to learn. Here's how all five approaches stack up.

| Method | Cost | Speed | Pre-Launch? | Accuracy | Best For |
|---|---|---|---|---|---|
| Likert survey | $5K-$15K | 2-3 weeks | Yes | Low (10% conversion) | Quantitative screening |
| Conjoint analysis | $15K-$50K | 4-6 weeks | Yes | Medium-High | Pricing and feature tradeoffs |
| Behavioral signals | $0 (existing tools) | Ongoing | No | High | Optimizing live products |
| Third-party intent | $10K-$50K/yr | Ongoing | No | Medium-High | B2B sales targeting |
| AI synthetic panel | $0-$249 | Under 5 min | Yes | High (screening) | Fast concept validation |

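The bottom line: Research by Chandon, Morwitz & Reinartz (2005) established that only 10% of stated purchase intentions convert to actual purchases. This finding, replicated across multiple product categories, means that overall stated-intent scores from traditional surveys need a correction factor of roughly 0.1 to 0.2 for realistic demand forecasting. That is a steeper discount than the 0.3 to 0.5 applied to top-box scores alone, because it covers every respondent who expresses some intent, not just the most committed.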

No single method gives you the complete picture. The most reliable approach combines a fast screening method (AI panels or basic surveys) with behavioral validation once you have a live product. Start cheap and fast. Go deeper on ideas that pass the initial screen.
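As a rough way to apply the comparison, the sketch below filters the five methods by budget and launch stage. The minimum costs and pre-launch flags are taken from the comparison table. The `Method` class and `candidate_methods` function are hypothetical names, and the filter ignores speed and accuracy for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Method:
    name: str
    min_cost_usd: int       # low end of the cost range in the table
    works_pre_launch: bool  # the "Pre-Launch?" column


METHODS = [
    Method("Likert survey", 5_000, True),
    Method("Conjoint analysis", 15_000, True),
    Method("Behavioral signals", 0, False),
    Method("Third-party intent", 10_000, False),  # cost is per year
    Method("AI synthetic panel", 0, True),
]


def candidate_methods(budget_usd, pre_launch):
    """Return names of methods that fit the budget and launch stage."""
    return [
        m.name
        for m in METHODS
        if m.min_cost_usd <= budget_usd
        and (m.works_pre_launch or not pre_launch)
    ]


print(candidate_methods(budget_usd=1_000, pre_launch=True))
print(candidate_methods(budget_usd=20_000, pre_launch=False))
```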

Frequently asked questions

What is a good purchase intent score?

A 15-20% top-box score (respondents selecting "definitely would buy") is considered strong for most consumer products. But remember the correction factor: only about half of top-box respondents actually buy (Ramanujam & Tacke). Apply a 0.3-0.5 multiplier to get a realistic conversion estimate.

Can you measure purchase intent before building a product?

Yes. Three methods work pre-launch: traditional purchase intent surveys, conjoint analysis, and AI synthetic consumer panels. Surveys and conjoint require significant budget ($5,000+). AI panels start at $0 and deliver results in minutes, making them the most accessible pre-launch option.

How many survey responses do you need for reliable purchase intent data?

Plan for 200-400 responses per segment you want to analyze separately. But account for data quality issues. With 38% of survey data now discarded for quality concerns, you need to recruit 330-650 respondents to end up with 200-400 usable responses.
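The recruiting math is just the usable target divided by the share of responses you expect to keep. A minimal sketch, assuming the 38% discard rate quoted above applies to your panel (the function name `respondents_to_recruit` is hypothetical):

```python
import math


def respondents_to_recruit(usable_target, discard_rate=0.38):
    """Return how many respondents to recruit to keep usable_target responses.

    discard_rate is the expected share of responses thrown out for quality.
    """
    if not 0.0 <= discard_rate < 1.0:
        raise ValueError("discard_rate must be at least 0 and below 1")
    return math.ceil(usable_target / (1.0 - discard_rate))


for target in (200, 400):
    print(f"{target} usable responses -> recruit {respondents_to_recruit(target)}")
```

With a 38% discard rate, this works out to 323 and 646 recruits, in line with the rounded 330-650 range. If your panel's discard rate is worse, the required sample grows quickly.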

Is purchase intent data more accurate than behavioral data?

No. Behavioral data (actual clicks, page visits, cart additions) is consistently more accurate than self-reported intent. But behavioral data requires an existing product generating traffic. Purchase intent data fills the gap when you need demand signals before launch.

What is the difference between purchase intent and purchase probability?

Purchase intent is self-reported: "I would definitely buy this." Purchase probability is a statistically adjusted estimate of actual purchase likelihood. Converting intent to probability requires applying correction factors based on historical conversion data for your product category.

Making purchase intent data work for you

Measuring purchase intent is not optional for product teams spending real money on development. But the method you choose determines whether your data predicts reality or just confirms your hopes.

Here's what to remember:

- Roughly 90% of stated purchase intentions never convert - never take survey scores at face value

- Behavioral signals are the most reliable method - but only work post-launch

- AI synthetic panels fill the pre-launch gap - fast, affordable, and validated

- No single method is enough - combine screening with deeper validation

- Survey quality is declining - account for 38% data discard rates

If you're pre-launch and want to see what purchase intent measurement looks like in practice, check out a sample report. When you're ready to test your own concepts, see pricing.
