Methodology

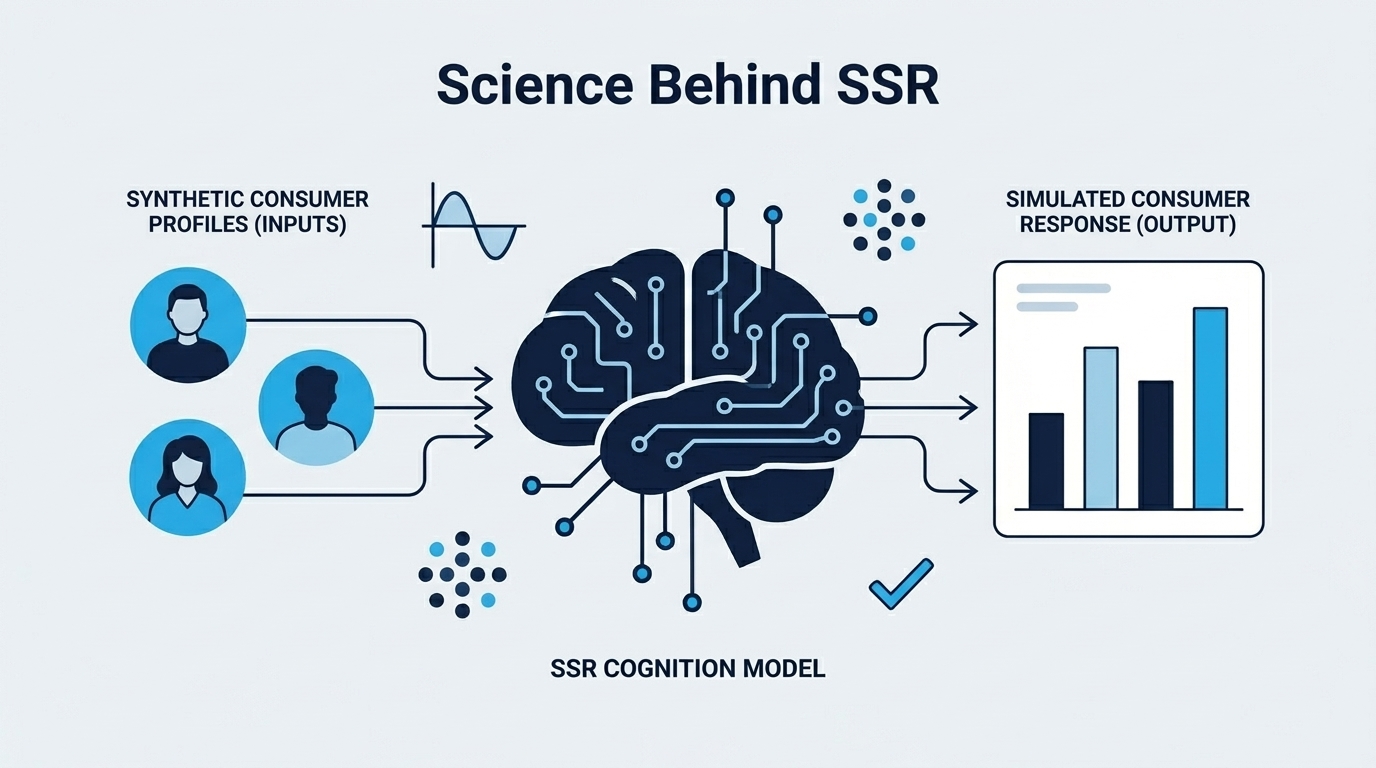

The Science Behind Synthetic Consumer Research

AI synthetic consumers now achieve approximately 90% of human test-retest reliability across 57 surveys and 9,300 real responses (Maier et al. / PyMC Labs, 2025). That number raises an obvious question: how do synthetic consumers actually work, and should you trust them over a real panel?

Most AI survey tools treat language models as simple question-answering machines. You ask "would you buy this?" and get a generic yes or no. That approach produces unreliable, undifferentiated results. Real synthetic consumer research requires a validated methodology with demographic grounding, independent multi-dimensional scoring, and distributional validation against human data.

This guide explains the Faceted Likert Rating (FLR) methodology, its validation against real human responses, and where it falls short. If you're earlier in the process, start with our guide on how to validate a product idea.

Key Takeaways

- FLR methodology validated against 9,300 human responses across 57 surveys (Maier et al. / PyMC Labs, 2025)

- Each response scored by an independent analyst on the 2-4 most strongly expressed of six purchase intent dimensions

- Persona conditioning, not simple prompting, drives response diversity

- Best used for concept screening, not as a replacement for all research

What is synthetic consumer research?

Synthetic consumer research uses large language models to simulate demographically targeted consumer responses to product concepts. According to Qualtrics (2025), 95% of senior research leaders already use synthetic data or plan to adopt it within 12 months. The methodology has moved from academic experiment to mainstream practice faster than most practitioners expected.

The core idea is straightforward. Instead of recruiting hundreds of human respondents, you condition a language model on specific demographic and psychographic attributes, then collect its responses to your product concept. The result is a panel of synthetic consumers, each with a unique personality shaped by their demographic profile, generating both quantitative scores and qualitative feedback.

But isn't that just asking ChatGPT for an opinion? Not remotely. The difference between reliable synthetic research and unreliable AI guessing comes down to three things: how you build each consumer's personality, how you score their responses, and how you validate the outputs against real human data.

How synthetic consumers differ from simple AI surveys

A simple AI survey asks a language model "would you buy this product?" and records the answer. There's no demographic grounding. No variance. No statistical basis for the response. You get one generic opinion that reflects the model's average training data, not any specific consumer segment.

Synthetic consumer research takes a fundamentally different approach. Each consumer receives a unique psychographic profile grounded in their demographics: age, income bracket, location, education level, interests, and purchasing psychology. A 28-year-old urban professional responds differently than a 55-year-old suburban retiree, because the model encodes different behavioral patterns for each demographic profile.

The result? A panel of 200 synthetic consumers produces realistic spread across the intent scale. Some are enthusiastic. Some are skeptical. Some are actively opposed. That variance mirrors what you'd see from a real human panel.

How does persona conditioning work?

Persona conditioning is the core differentiator between reliable synthetic research and unreliable AI surveys. Columbia University's digital twin approach, which uses similar demographic conditioning, achieved 85% accuracy predicting consumer preferences (HBR, 2025). The technique works because LLMs encode demographic behavioral patterns from their training data.

What goes into a persona profile?

Every synthetic consumer receives a detailed profile before evaluating a product concept. The profile starts with demographics - age, gender, income bracket, location, and education level. On top of that, multiple dimensions of purchasing psychology shape how each consumer thinks about buying decisions. Finally, contextual factors like purchase channel preferences, brand relationships, and category familiarity round out the picture.

Each consumer's personality is written as natural prose, not a checkbox list. One consumer might be an early-adopter who gravitates toward premium products and trusts brand claims. Another might be a deal-hunting skeptic who researches extensively before buying. Both are valid perspectives grounded in their demographic profile.
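As a rough illustration of the "prose, not checkbox list" idea, the sketch below turns a structured profile into a short paragraph. The `Persona` class, its field names, and the sentence templates are all hypothetical; they are not the production schema, only one way such a profile could be rendered.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    """Hypothetical persona record; field names are illustrative assumptions."""
    age: int
    gender: str
    income_bracket: str
    location: str
    education: str
    psychology: tuple[str, ...] = ()  # purchasing-psychology traits
    context: tuple[str, ...] = ()     # channel, brand, and category familiarity


def describe(p: Persona) -> str:
    """Render the profile as natural prose rather than a list of attributes."""
    sentences = [
        f"You are a {p.age}-year-old {p.gender} living in {p.location}.",
        f"Your income bracket is {p.income_bracket} and you have {p.education}.",
    ]
    if p.psychology:
        sentences.append("When you shop, you are " + ", ".join(p.psychology) + ".")
    if p.context:
        sentences.append("You also " + ", ".join(p.context) + ".")
    return " ".join(sentences)
```

Calling `describe` on an early adopter and on a deal-hunting skeptic gives two different paragraphs built from the same template, and each one can be dropped into a prompt as that consumer's background.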

Why conditioning produces realistic variance

Without demographic conditioning, LLMs default to generic, middle-of-the-road responses. Every answer sounds like it comes from the same moderately interested, moderately skeptical, moderately affluent person. That's useless for product research.

Conditioning changes the distribution of responses. The demographic and psychographic data acts as a behavioral prior, not a script. The model doesn't follow a template. It draws on patterns associated with that profile from its training data, producing authentic variation across the panel.

We discovered early on that keeping the system prompt static while injecting persona details into the user prompt produced more reliable results than combining everything into a single prompt. The static system prompt can be cached across all calls, while each consumer gets their own unique user prompt. This separation matters for both performance and accuracy.
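The separation described above can be sketched as follows. The system prompt wording, the `build_messages` helper, and the role/content message shape (a common chat-style convention) are assumptions for illustration, not the actual prompts or client code.

```python
# Hypothetical static system prompt: identical for every consumer,
# so it can be cached across all calls.
SYSTEM_PROMPT = (
    "You are a consumer reacting to a product concept. "
    "Give a brief, gut-level reaction in your own words."
)


def build_messages(persona_text: str, concept: str) -> list[dict[str, str]]:
    """Keep the system prompt fixed and put the persona in the user prompt."""
    user_prompt = f"About you: {persona_text}\n\nProduct concept: {concept}"
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_prompt},
    ]
```

Because only the user message changes from one consumer to the next, every call shares the same prefix, which is what makes the static part cacheable.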

How does Faceted Likert Rating score purchase intent?

Traditional purchase intent surveys use Likert scales, but the numbers they produce are misleading. Research by Chandon, Morwitz, and Reinartz found that only 10% of stated purchase intentions convert to actual purchases. FLR bypasses the limitations of single-scale ratings by using an independent analyst to evaluate free-text responses across six purchase intent dimensions, each anchored by calibrated reference statements.

The three-step scoring process

FLR scoring works in three distinct steps.

Step 1: The consumer reacts. Each synthetic consumer generates a brief gut reaction to the product concept. The system explicitly instructs consumers to respond emotionally rather than analytically, mirroring how real purchase decisions happen. Short, decisive reactions capture purchase intent more accurately than long analytical responses.

Step 2: An independent analyst evaluates the reaction. A separate AI model, one that never saw the consumer's personality or demographics, reads the response and scores it against six purchase intent dimensions:

| Dimension | What it measures | Example reference anchor (5.0) |
|---|---|---|
| Buying | Likelihood of purchasing | "It is very likely I would buy it" |
| Interest | Curiosity or engagement | "I am very interested in this product" |
| Appeal | Attractiveness or desirability | "This really appeals to me" |
| Consideration | Willingness to evaluate purchasing | "I would definitely consider buying this" |
| Value | Worth or cost-benefit judgment | "This is excellent value for me" |
| Choice | Active decision to select this product | "I would choose to buy this" |

Each dimension has five calibrated reference statements anchoring the 1.0-5.0 scale. The analyst uses these as measurement benchmarks, not as a rigid rubric.

Step 3: Adaptive dimension selection. The analyst identifies the 2-4 dimensions most strongly expressed in each response. It doesn't force-fit all six. A response focused on price sensitivity gets scored on value and consideration. A response full of enthusiasm gets scored on interest and appeal. The consumer's final score is the average of their identified dimension scores.

This adaptive approach means every consumer's score reflects what they actually expressed, not what a forced scale imposed on them.
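The final-score rule from Step 3 is simple enough to sketch directly. The function name, the lowercase dimension keys, and the validation checks are illustrative assumptions; only the "average of the 2-4 identified dimensions on a 1.0-5.0 scale" rule comes from the description above.

```python
from statistics import mean

# The six purchase intent dimensions from the table above.
DIMENSIONS = ("buying", "interest", "appeal", "consideration", "value", "choice")


def consumer_score(selected: dict[str, float]) -> float:
    """Average the scores of the dimensions the analyst identified."""
    unknown = set(selected) - set(DIMENSIONS)
    if unknown:
        raise ValueError(f"unknown dimensions: {sorted(unknown)}")
    if not 2 <= len(selected) <= 4:
        raise ValueError("expected 2-4 identified dimensions")
    for name, score in selected.items():
        if not 1.0 <= score <= 5.0:
            raise ValueError(f"{name} score {score} is outside the 1.0-5.0 scale")
    return mean(list(selected.values()))
```

For a price-focused response scored 2.0 on value and 3.0 on consideration, `consumer_score({"value": 2.0, "consideration": 3.0})` returns 2.5. The other four dimensions play no part in that consumer's score.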

Why multi-dimensional scoring beats direct rating

The positivity bias problem: When you tell an LLM to "rate this product concept from 1 to 5," responses cluster around 3 and 4. This positivity bias is consistent and well-documented. The model wants to be helpful, and helpful tends to mean positive.

We observed this clustering repeatedly during early development. Direct Likert scoring produced distributions with minimal variance and a strong upward skew. Switching to free-text responses with independent multi-dimensional scoring removed that clustering in our tests.

FLR lets the consumer write naturally about the product. The consumer expresses hesitations, objections, and conditional interest in their own words. The independent analyst then captures all of that nuance, mapping it to calibrated scales across multiple dimensions. The consumer and the scorer never share context, which prevents the scorer from inheriting the consumer's framing.

The result is a more realistic distribution that matches human panel responses. It captures the full spectrum: enthusiasm, indifference, skepticism, and rejection.

What does the validation data show?

The FLR methodology builds on research validated against 9,300 real human responses across 57 surveys (Maier et al. / PyMC Labs, 2025). AI synthetic consumers achieve approximately 90% of human test-retest reliability. This means the distribution of responses, not just the average, closely matches human data.

The 9,300-response validation study

The foundational validation compared synthetic consumer response distributions against real human panel distributions across 57 surveys spanning multiple product categories and demographics. The key metric is distributional similarity, not mean accuracy. Getting the average right is easy. Matching the full distribution, including the tails, is what matters for product decisions.

For context, human panels don't perfectly agree with themselves when retested. Synthetic panels achieve roughly 90% of that already-imperfect human benchmark. That's a high bar.
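To make "distributional similarity" concrete, here is a minimal sketch that compares two sets of 1-5 ratings by the largest gap between their cumulative distributions (a Kolmogorov-Smirnov style distance). This particular metric, the integer rating scale, and the ratio helper are assumptions chosen for illustration; the text above does not specify the exact statistic the study used.

```python
from collections import Counter
from itertools import accumulate

SCALE = (1, 2, 3, 4, 5)  # assumes integer ratings on a five-point scale


def cdf(ratings: list[int]) -> list[float]:
    """Cumulative share of ratings at or below each scale point."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    counts = Counter(ratings)
    return list(accumulate(counts[point] / len(ratings) for point in SCALE))


def ks_similarity(a: list[int], b: list[int]) -> float:
    """1.0 for identical distributions, lower as the distributions diverge."""
    return 1.0 - max(abs(x - y) for x, y in zip(cdf(a), cdf(b)))


def share_of_ceiling(synthetic_vs_human: float, human_vs_retest: float) -> float:
    """One plausible reading of 'share of the human test-retest benchmark'."""
    return synthetic_vs_human / human_vs_retest
```

The sketch also shows why the mean alone is not enough. The toy panels `[3, 3, 3, 3]` and `[1, 5, 1, 5]` both average 3.0, yet `ks_similarity` gives them 0.5, because one panel is uniformly lukewarm and the other is split between rejection and enthusiasm.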

Our own validation: the cricket cookie test

We've also validated the methodology independently. We ran the system against a published university study where 150 real consumers evaluated chocolate chip cookies containing cricket protein powder (Gao et al., Foods, 2024). This is one of the hardest benchmarks possible: cricket protein triggers visceral disgust that's difficult for AI to simulate.

The synthetic panel matched the human control condition within 0.04 points (3.80 vs 3.84) and exactly matched the nutrition-claims condition (2.71 vs 2.71). More importantly, synthetic consumers responded to product changes the same way real consumers did, with purchase intent dropping when cricket protein was revealed and recovering as positive information was added.

Where did the gap appear? The synthetic panel scored cricket protein conditions about 0.5-0.7 points higher than humans. AI consumers process "cricket protein" as an argument, not a feeling. Real humans have a gut-level disgust response that AI can reason about but can't feel. This tells you exactly where to trust synthetic results and where to validate further.

Where the methodology performs best

FLR shines in specific use cases:

- Concept screening - relative ranking of multiple product concepts against each other

- Directional signals - identifying strong positive or strong negative reactions

- Qualitative theme identification - surfacing common objections, feature requests, and positioning weaknesses

- Well-defined segments - consumer groups with clear demographic profiles
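For the concept screening use case in the list above, relative ranking can be as simple as comparing mean panel scores across concepts. The sketch below assumes each concept already has a list of per-consumer scores on the 1.0-5.0 scale; `rank_concepts` and the input shape are hypothetical.

```python
from statistics import mean


def rank_concepts(panel_scores: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Order concepts from highest to lowest mean panel score."""
    means = {name: mean(scores) for name, scores in panel_scores.items()}
    return sorted(means.items(), key=lambda item: item[1], reverse=True)
```

The output is an ordering, which is the part to rely on. The means themselves are best read as relative positions rather than sales forecasts.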

Where the methodology falls short

Why honesty matters here: Most AI research content positions synthetic consumers as a replacement for traditional research. That framing is wrong, and it undermines trust. Knowing the limitations makes synthetic research more useful, not less, because you know exactly when to trust the signal and when to dig deeper.

Here's where FLR doesn't work well:

- Absolute numbers - don't treat a 4.1/5 score as a precise sales prediction. Use scores for relative comparison between concepts.

- Impulse purchases - AI consumers tend to be more analytical than humans when the real decision is driven by impulse. Scores for low-consideration products may understate emotional buying.

- Visceral emotional responses - disgust, excitement, and sensory reactions are muted. Products that depend on taste, texture, smell, or physical interaction can't be fully simulated.

- Novel product categories - products with no precedent in the model's training data produce less reliable responses.

- Niche subcultures - highly specific communities underrepresented in training data get generic treatment.

The honest answer is that FLR tells you which direction to run, not exactly how far you'll get. That directional signal is still the most cost-effective input available for pre-launch product decisions.

How does qualitative feedback synthesis work?

Quantitative scores tell you how much consumers like a product. Qualitative synthesis tells you why. Usable survey responses have declined from 75% to roughly 10% due to professional respondent fraud (PMC, 2024). AI-generated qualitative feedback offers a fraud-free alternative.

Each synthetic consumer generates a free-text response that includes specific reactions, concerns, and reasoning. Across a panel of 200+ responses, common themes emerge naturally. A synthesis model distills these into actionable summaries covering four areas:

- What consumers liked about the concept

- What concerned or confused them

- Suggested improvements and feature requests

- Positioning weaknesses and messaging gaps

The result reads like a condensed focus group report, but without the recruitment headaches, fraud risk, or $15,000 price tag. Want to see what this looks like in practice? Check out a sample report.

How does FLR compare to other research methods?

Not all AI research tools use the same methodology. Traditional research methods like focus groups cost $5,000-$15,000 per study, while synthetic consumer research delivers comparable directional insights at a fraction of the cost. But the accuracy and approach vary widely across tools. Picking the wrong one gives you false confidence.

| Approach | Method | Accuracy Signal | Best For |
|---|---|---|---|
| Simple LLM survey | Ask LLM to rate 1-5 | Low (positivity bias) | Quick, unreliable gut-check |
| Persona-conditioned survey | Conditioned LLM + Likert | Medium (forced scale limits) | Better than simple, still limited |
| FLR (faceted scoring) | Conditioned LLM + independent multi-dimensional scoring | High (90% of human test-retest) | Concept screening with quantitative rigor |
| Digital twins (Columbia) | Fine-tuned on individual data | High (85% accuracy, HBR, 2025) | Enterprise with existing customer data |
| Traditional survey panel | Human respondents + Likert | Variable (10% stated intent converts) | Statistical rigor when panel quality is high |
| Focus groups | Moderated small-group discussion | Medium-High (qualitative depth) | Exploring emotional reactions, but expensive and slow |

The gap between the top and bottom of this table is enormous. A simple LLM survey gives you one data point with no demographic grounding. FLR gives you a statistically validated distribution across targeted consumer segments. They aren't even the same category of tool.

How does FLR differ from "just asking ChatGPT?" Three things. First, each consumer has a unique personality shaped by their demographic profile, not a generic prompt. Second, an independent analyst scores responses against calibrated reference statements across six dimensions, removing the positivity bias of self-rating. Third, the methodology is validated against published human research, not assumed to be accurate.

The digital twins market, which covers much of this territory, is projected to grow from $24.48 billion to $384.79 billion by 2034 (Fortune Business Insights, 2024). That growth reflects how seriously organizations are taking synthetic data approaches for consumer research.

Frequently asked questions

Is synthetic consumer research a replacement for traditional surveys?

No. Synthetic consumer research is a screening tool, not a replacement. It excels at relative ranking and directional signals. AI panels achieve approximately 90% of human test-retest reliability (Maier et al. / PyMC Labs, 2025), but critical launch decisions with significant financial exposure still benefit from human validation.

How many synthetic consumers do you need for reliable results?

Research suggests 100-300 synthetic consumers per segment produce stable distributions. More respondents reduce variance, but returns diminish past 300. Unlike traditional panels, where researchers discard 38% of data for quality issues (Frontiers in Psychology, 2024), synthetic responses don't suffer from fraud or inattentiveness.
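The diminishing returns follow from how the standard error of a panel mean shrinks with panel size. The sketch below assumes independent responses and a known score standard deviation, which is a simplification for synthetic panels; the function name is illustrative.

```python
import math


def standard_error(sd: float, n: int) -> float:
    """Standard error of the mean for n independent responses."""
    if n <= 0:
        raise ValueError("n must be positive")
    return sd / math.sqrt(n)
```

With a score standard deviation of 1.0, the standard error is 0.1 at 100 consumers, about 0.058 at 300, and about 0.033 at 900. Each tripling of the panel shrinks the error by the same factor of roughly 1.73, so the absolute gain per added respondent keeps falling.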

Can synthetic consumers evaluate new product categories?

With caveats. LLMs perform best for product categories well-represented in their training data. Novel categories with no precedent may produce unreliable responses. The practical workaround is to test against known products first to calibrate expectations before testing novel concepts.

What makes FLR different from asking ChatGPT about a product idea?

Three things separate them. Persona conditioning provides demographic grounding instead of generic responses. Independent multi-dimensional scoring evaluates responses across six purchase intent dimensions rather than producing a single biased rating. Validation against human data confirms approximately 90% of human test-retest reliability. Asking ChatGPT directly gives you one generic opinion with no variance and no statistical basis.

For more on practical measurement approaches, read our guide on how to measure purchase intent.

Making synthetic consumer research work for you

The FLR methodology isn't a black box. It's a documented, validated approach with clear strengths and honest limitations.

The methodology has been validated against 9,300 human responses across 57 surveys - not theoretical projections, but real distributional comparisons. Persona conditioning produces realistic variance where simple prompting does not. Independent multi-dimensional scoring avoids the positivity bias that plagues direct Likert ratings by evaluating across six calibrated dimensions. The approach works best for concept screening and directional signals, not absolute sales predictions, and the limitations are documented transparently throughout this guide.

What makes FLR work is the combination of three things that no single approach offered before: demographic conditioning for realistic variance, independent multi-dimensional scoring for unbiased measurement, and distributional validation for statistical credibility. will.it.sell implements this methodology so product teams can run validated concept screening in minutes rather than weeks.

If you want to see the methodology in action, check out a sample report. When you're ready to test your own product concepts, see pricing.
