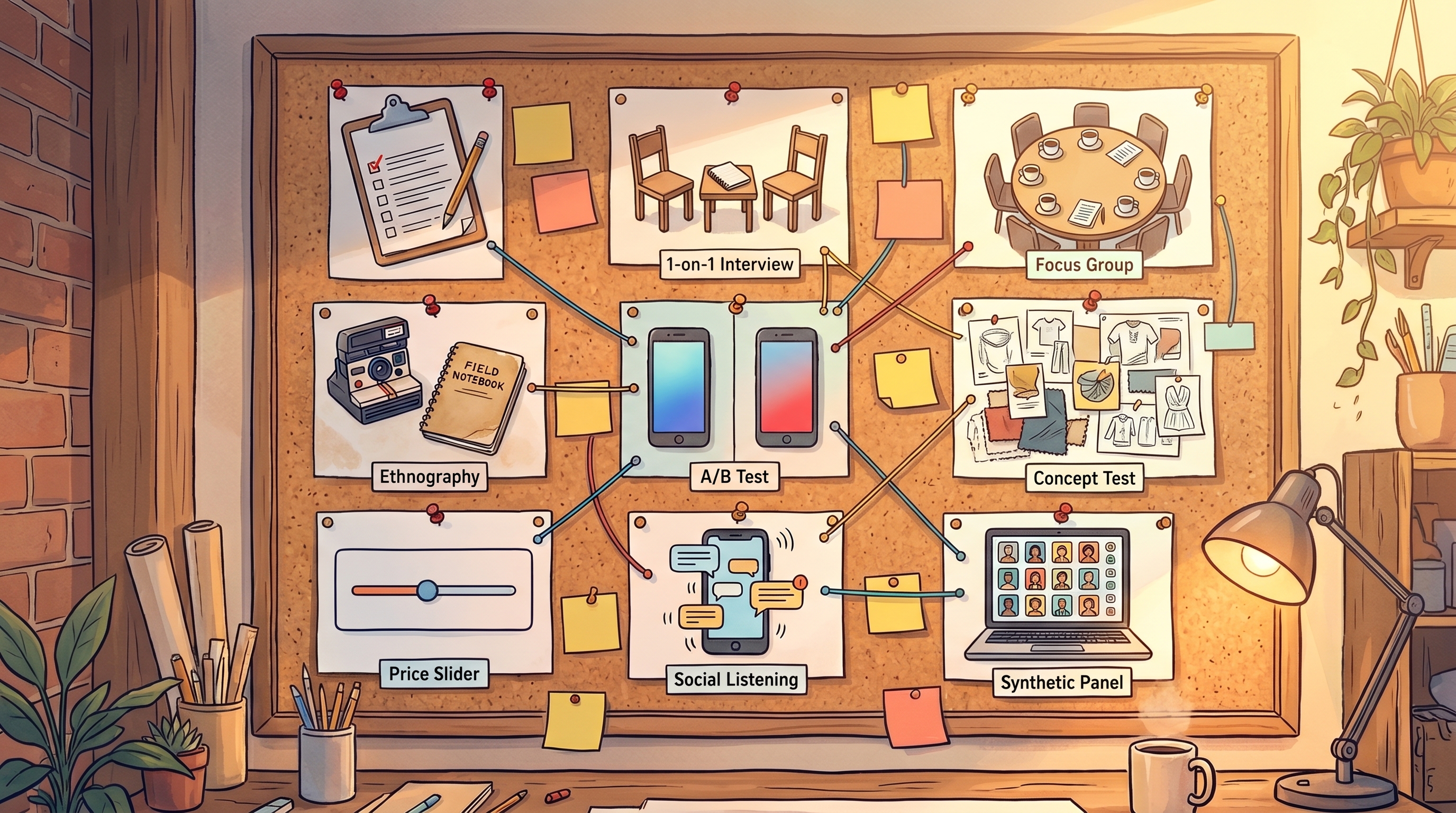

Market research methods in 2026: the decision matrix

Search volume for "market research methods" jumped 233% quarter over quarter. You'd expect the top-ranking results to reflect how people actually do research in 2026. They don't. The same five-to-eight-method list has been recycled since before synthetic consumer panels, LLM-assisted coding, and scaled social listening became standard tools. Most top pages still read like 2018.

That gap matters because the decision you're making isn't "what is market research." It's "which method fits my stage, my budget, my timeline, and the specific question I need answered." The old lists skip the chooser, skip the costs, and skip the newest methods entirely. This page is the 2026 version: nine methods, grouped by type, with concrete cost and sample-size numbers and a decision matrix you can screenshot.

Quick disclosure before anything else. I'm building will.it.sell, a pre-revenue B2C synthetic consumer research tool, so I have a point of view on the newest methods in the list. The older methods are documented from published cost benchmarks and practitioner sources, not from a consulting practice, which I don't run. Read the validation procedure that orders these methods.

Key Takeaways

- Nine market research methods matter in 2026: surveys, in-depth interviews, focus groups, ethnography, A/B testing, concept tests, conjoint analysis, social listening, and synthetic consumer panels.

- Costs span roughly $5 per synthetic-panel run to $100,000+ for an ethnography (Drive Research, 2026).

- Speed spans minutes (synthetic panels) to 16 weeks (full ethnography). A/B tests run as long as it takes to reach the required sample.

- The right choice depends on stage (pre-idea, pre-launch, post-launch) and question (prevalence, reasons, willingness-to-pay, behavior).

- The decision matrix at the end maps each method to the situation it fits.

What is a market research method?

A market research method is a structured way to gather or analyze information about customers, competitors, or a market so you can make a decision. Methods differ along four axes: qualitative versus quantitative output, primary versus secondary data, behavior versus stated preference, and real-panel versus synthetic-panel respondents.

Four axes of difference

- Qualitative vs quantitative. Themes and language versus numbers and prevalence.

- Primary vs secondary. You collect the data yourself, or you analyze someone else's collection.

- Behavior vs stated preference. What people did versus what they say they'd do.

- Real panel vs synthetic. Recruited human respondents versus LLM-simulated personas.

Why the old taxonomy needs updating

Pre-2024 lists treat research as "survey somebody and analyze the answers." That worked when the method set was narrow. It doesn't in 2026. Behavioral sources like product analytics and social listening sit between qualitative and quantitative. Synthetic consumer panels don't fit either classic branch at all. If your taxonomy still ends at "surveys and focus groups," you're planning research with a 2018 map.

A market research method is a structured data-gathering approach for customer and market decisions. The nine methods used in 2026 differ along four axes: qualitative versus quantitative output, primary versus secondary sourcing, behavior versus stated preference, and real versus synthetic respondents. The axes decide which method fits which question.

Qualitative vs quantitative market research

Qualitative methods surface the reasons behind a behavior in small samples. Quantitative methods measure how common a behavior is in large ones. Most real projects need both. The mistake is picking one and forcing it to answer a question the other was designed for.

Qualitative methods

In-depth interviews, focus groups, ethnography, and observational studies. Their strength is the "why," the unprompted language, and the hypothesis generation. Their limit is that they can't tell you prevalence, and transcripts coded by humans are slow and costly.

Quantitative methods

Surveys, A/B testing, concept tests, and conjoint analysis. Their strength is statistical reliability, prevalence estimates, and effect sizes. Their limit is they answer only the questions you asked, and stated intent overstates actual behavior.

Hybrid methods (new in 2026)

Social listening and synthetic consumer panels sit between the two camps. They return both textual responses and structured metrics at scale. Synthetic panels in particular turn what used to be a small-sample qualitative method (deep persona conversation) into something you can run at survey scale, in minutes, for a fraction of the cost. That's the shift the old taxonomy missed.

Surveys: the default quantitative workhorse

A survey asks a defined set of questions to a sample of respondents and reports the frequencies. It's the default quantitative method because it's cheap, fast, and scales. It's also the most abused method in the list. Most "survey results" you've ever seen are biased by sample, question wording, or stated-intent inflation.

When to use

Measuring brand awareness, preference, stated intent, or satisfaction across a large sample. Comparing two audiences on the same metric. Tracking a metric over time.

Cost and speed

Online surveys run $5,000-$15,000+ for 400 responses including panel, design, and analysis (Drive Research, 2026). Phone surveys push that to $15,000-$30,000+ for the same sample. Timeline runs 1-3 weeks end to end online. B2B or specialized audiences cost more per respondent because recruiting is harder.

Sample size

Four hundred responses give roughly a ±5-point margin of error at 95% confidence for general-population estimates. Plan on 100 to 150 per subgroup if you intend to cut the data.
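Here's a minimal sketch of where the 400 figure comes from, assuming simple random sampling and the usual normal approximation for a proportion. The function name `margin_of_error` is mine, and real panels with quotas or weighting will behave a bit worse than this.

```python
import math
from statistics import NormalDist


def margin_of_error(n: int, p: float = 0.5, confidence: float = 0.95) -> float:
    """Half-width of a normal-approximation confidence interval for a proportion.

    p = 0.5 is the worst case (widest interval), which is the usual planning default.
    """
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 at 95% confidence
    return z * math.sqrt(p * (1 - p) / n)


for n in (100, 150, 400):
    print(f"n={n}: +/-{margin_of_error(n) * 100:.1f} points")
```

At 400 responses the margin lands just under five points. At 100 it's close to ten, which is why subgroup cuts smaller than that get noisy fast.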

Watch-outs

Stated purchase intent inflates actual buying. Chandon, Morwitz, and Reinartz documented in the Journal of Marketing that the act of asking about intent itself changes later purchase rates, a phenomenon called self-generated validity (Chandon et al., 2005). Top-box "definitely would buy" responses routinely convert at 40-60% of the stated rate in concept tests. Don't take the raw score at face value. See how to correct for stated-intent overstatement.
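As a rough illustration of that discount, here's a sketch that applies the 40-60% conversion range quoted above to a raw top-box share. The function name and the 30% input are hypothetical, and a flat multiplier is a simplification, not a calibrated forecasting model.

```python
def adjusted_intent(
    top_box_share: float,
    conversion_low: float = 0.4,
    conversion_high: float = 0.6,
) -> tuple[float, float]:
    """Discount a stated top-box share by an assumed conversion range."""
    return top_box_share * conversion_low, top_box_share * conversion_high


# Hypothetical concept test: 30% of respondents say "definitely would buy".
low, high = adjusted_intent(0.30)
print(f"Plan for roughly {low:.0%} to {high:.0%}, not 30%")
```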

In-depth interviews: the qualitative baseline

An in-depth interview is a 30-60 minute one-on-one conversation with a target-audience respondent, recorded and then coded for themes. It's how you find out the questions your survey should have asked. Five users surface roughly 85% of the usability problems in a given test (Nielsen Norman Group, 2000), and practitioners apply the same rule of thumb to interview themes within a single segment. That number hasn't moved in 25 years because it reflects how quickly repeated conversations stop producing new findings, not any particular technology.
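The 85% figure matches the discovery model Nielsen published with it: the share of problems found after n users is 1 - (1 - L)^n, where L is the chance that a single user reveals a given problem (Nielsen reports about 31%). A minimal sketch, assuming that model carries over from usability testing to interview themes, which is a stretch worth naming:

```python
def share_found(n_users: int, discovery_rate: float = 0.31) -> float:
    """Expected share of findings surfaced after n_users sessions.

    discovery_rate is the probability that one session reveals a given finding.
    The 0.31 default is the average Nielsen reports for usability tests.
    """
    return 1 - (1 - discovery_rate) ** n_users


for n in (5, 10, 20):
    print(f"{n} interviews: {share_found(n):.0%}")
```

The curve flattens quickly, which is the logic behind "minimum five, standard ten." If your segment is more varied than a typical usability audience, the discovery rate drops and you need more sessions.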

When to use

Before writing a survey, to calibrate the question set. After a quantitative result, to explain a surprising number. Whenever you need unprompted customer language for copy, positioning, or features.

Cost and speed

U.S. B2C interviews run about $500 each all-in (Center for Strategy Research, 2025). Participant incentives typically run $75-$150 (Drive Research, 2026). Five to ten interviews take 1-2 weeks with focused recruiting.

Sample size

Minimum five, standard ten, serious commitment twenty.

Watch-outs

Ask about past behavior, not hypothetical future intent. "Would you pay for this?" is one of the worst questions you can ask in an interview. "What did you do the last time you had this problem?" is one of the best. Recruiting from friends, Twitter followers, or existing customers skews the sample in ways you'll find out only after shipping. Define your target audience before you pick up the phone.

Focus groups: the method that keeps getting questioned

A focus group is a moderated 60-90 minute discussion with 6-10 target-audience participants, designed to surface group dynamics and shared reactions to stimulus. It's the second most visible qualitative method in practice, even though usability researchers have argued for decades that individual interviews produce cleaner signals.

When to use

Stimulus response to packaging, ads, concepts, or prototypes. Observing language and agreement patterns between peers. Stakeholder settings where seeing the audience react in person matters politically.

Cost and speed

A standard focus group project runs $10,000-$30,000 (Drive Research, 2026). Online formats reduce cost by eliminating facility fees and participant travel. Multi-market projects (4-6 groups across 2-3 cities) stack well above that range. Participant incentives typically run $75-$150 for B2C and $500+ for B2B. Timeline is typically 3-6 weeks end to end.

Sample size

Six to ten per group. A standard project fields 24-60 total participants across groups.

Watch-outs

Dominant-voice bias is the main failure mode: one confident opinion shapes the whole room and the recording. Confirmation risk runs close behind. Sponsors watching through the one-way mirror tend to interpret ambiguous quotes as supportive, because that's what they came to hear. Nielsen's old critique, that interviews produce better UX signal than groups, applies here too.

Ethnography and observational studies

Ethnography observes target customers in their natural environment (at home, at work, in the store) over hours or days, without prompting them. It's the most expensive primary method in the list and the one that surfaces behaviors respondents would never mention in an interview.

When to use

Deeply contextual products (health, home, in-store behavior). When stated behavior contradicts what you suspect people actually do. Cultural or cross-market context where stated-preference methods bias toward the researcher's cultural frame.

Cost and speed

Full ethnographic studies run $30,000-$100,000+ (Drive Research, 2026). Timelines run 8-16 weeks including planning, fieldwork, and analysis.

Sample size

Eight to twenty households or participants, depending on depth.

Digital ethnography variant

Mobile diary studies, screen recordings, and shop-along videos reduce the cost and compress the timeline while keeping most of the behavioral signal. Digital ethnography is how this method survives in 2026 budgets.

A/B testing: the behavioral benchmark

A/B testing measures actual behavior by randomly assigning users to two or more variants and comparing conversion rates. It's the only method in the list that measures behavior in a controlled experiment rather than relying on stated preference, which makes it the benchmark everything else calibrates against. It also only works after you have traffic.

When to use

Optimizing a live product, landing page, pricing page, or email. Comparing two feature configurations. Validating a concept at the purchase moment, not the survey moment.

Cost and speed

Tool cost runs $0-$3,000 per month (Statsig, VWO, Optimizely tiers). Sample size drives timeline far more than cost does. Detecting a 5-10% lift at 80% power and 95% confidence typically needs 5,000-20,000 users per variant (Evan Miller sample-size calculator, industry-standard reference). The exact figure depends heavily on your baseline conversion rate: the lower the baseline, the more users you need.

Sample size

Run a sample-size calculator before you launch. Don't eyeball it. Eighty percent power and 95% confidence are the industry defaults.
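If you want to see what a calculator is doing, here's a sketch using the textbook two-proportion normal approximation. It's not a copy of any specific tool's formula, the function name is mine, and the 10% baseline in the example is a made-up input.

```python
import math
from statistics import NormalDist


def users_per_variant(
    baseline: float,
    relative_lift: float,
    power: float = 0.80,
    confidence: float = 0.95,
) -> int:
    """Approximate users needed per variant for a two-sided test of two proportions."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # two-sided threshold
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical page converting at 10%, looking for a 10% relative lift.
print(users_per_variant(baseline=0.10, relative_lift=0.10))
# Halving the detectable lift roughly quadruples the requirement.
print(users_per_variant(baseline=0.10, relative_lift=0.05))
```

The second call is the reason not to eyeball it. Small lifts on low baselines can push the requirement well past what your traffic supports.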

Watch-outs

Novelty effects inflate lift in the first week; results often regress afterward. Peeking at the numbers before the sample is complete inflates false positives. And the obvious one: you can't A/B test a product you haven't built yet.

Concept tests: monadic and sequential

A concept test shows respondents a proposed product, ad, or feature and measures reaction metrics like purchase intent, uniqueness, and believability. Monadic tests show one concept per respondent. Sequential monadic tests show several. Monadic is more reliable but costs more per concept. Sequential is cheaper but contaminates judgement across concepts.

When to use

Before committing to a product or campaign direction. Comparing 2-5 concept variants. Benchmarking against Nielsen BASES or category norms if your industry has them.

Cost and speed

DIY concept surveys run $2,000-$5,000. Full Nielsen BASES or equivalent runs $25,000-$75,000. Timelines run 1-4 weeks depending on vendor and sample.

Sample size

Monadic designs want 150-200 per concept minimum. Sequential monadic typically runs 100-150 total respondents, each seeing 3-5 concepts.

Watch-outs

Top-box intent inflation is the same problem that haunts all surveys: "definitely would buy" overstates actual purchase. Sequential designs bias later concepts against earlier ones unless the order is randomized properly. And "uniqueness" scored by stated preference rarely predicts market uniqueness.
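One simple way to handle the order problem is to rotate the concept list so every concept appears in every position equally often. This is a sketch with hypothetical concept labels. It balances position only, so it doesn't fully remove carryover between specific pairs of concepts.

```python
import random
from collections import Counter


def balanced_orders(concepts: list[str], n_respondents: int, seed: int = 42) -> list[list[str]]:
    """Give each respondent a cyclic rotation of the concept list.

    When n_respondents is a multiple of len(concepts), every concept appears
    in every position the same number of times.
    """
    k = len(concepts)
    rotations = [concepts[s:] + concepts[:s] for s in range(k)]
    assigned = [rotations[i % k] for i in range(n_respondents)]
    # Shuffle which respondent gets which rotation so order isn't tied to arrival time.
    random.Random(seed).shuffle(assigned)
    return assigned


orders = balanced_orders(["A", "B", "C"], n_respondents=120)
print(Counter(order[0] for order in orders))  # each concept leads 40 times
```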

Conjoint analysis: pricing and feature tradeoffs

Conjoint analysis presents respondents with forced-choice tradeoffs between products defined by several attributes (price, features, brand) and statistically decomposes which attributes drive preference. It's how you learn what each feature is worth and at what price the product stops being bought.
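To make "what each feature is worth" concrete: once a conjoint model has estimated a utility for each feature and a utility per dollar of price, a common back-of-envelope conversion divides one by the other. The sketch below assumes a linear price term, and every number and feature name in it is invented for illustration.

```python
def willingness_to_pay(part_worths: dict[str, float], price_coefficient: float) -> dict[str, float]:
    """Convert feature utilities into dollar values using a linear price coefficient.

    price_coefficient is the utility change per extra dollar and must be negative.
    """
    if price_coefficient >= 0:
        raise ValueError("price_coefficient should be negative: higher price, lower utility")
    return {feature: utility / -price_coefficient for feature, utility in part_worths.items()}


# Hypothetical estimates from a choice-based conjoint model.
part_worths = {"offline_mode": 0.60, "priority_support": 0.30}
wtp = willingness_to_pay(part_worths, price_coefficient=-0.05)
for feature, dollars in wtp.items():
    print(f"{feature}: about ${dollars:.0f}")
```

With these made-up inputs, offline mode is worth about twice what priority support is. Treat the dollar figures as relative rankings until they're checked against real purchase data.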

When to use

Pricing decisions that need willingness-to-pay decomposed by feature. Feature prioritization when you have 4-10 candidate features. Bundle design and product-line architecture.

Cost and speed

DIY platforms run from roughly $900 per year (OpinionX) or $4,500 per year (Sawtooth Discover). Full-service projects run $25,000-$100,000+ (Drive Research, 2026). Timelines run 1-3 weeks DIY, 8-14 weeks full-service.

Sample size

Two hundred respondents minimum for choice-based conjoint. Four hundred or more if you plan subgroup analysis.

Watch-outs

Too many attributes trigger survey fatigue, and respondents start to satisfice. Hypothetical tradeoffs are still hypothetical. Conjoint predicts relative preference well, but absolute uptake estimates need calibration against real purchase data.

Social listening: the always-on behavioral signal

Social listening uses tools like Sprinklr, Brandwatch, Sprout Social, Meltwater, and Talkwalker to monitor and analyze public social-media and review content at scale. It's the largest always-on category in the list. The social media listening market reached $12.15 billion in 2026 (Research and Markets, 2026).

When to use

Brand and campaign tracking. Competitive monitoring. Trend spotting and crisis detection. Mining unprompted customer language for copy.

Cost and speed

DIY tier runs $100-$500 per month. Enterprise tier runs $1,500-$15,000 per month. The system is always-on once configured, with first usable reports landing in days.

Sample size

Driven by conversation volume, not recruitment. This is the only method in the list where sample size isn't a planning variable.

Watch-outs

Public social skews young, opinionated, and non-representative. Sentiment classifiers still misread sarcasm and domain-specific jargon, especially in health, finance, and regulated categories.

Synthetic consumer panels: the 2026 addition

A synthetic consumer panel uses LLM-simulated personas built from demographic and psychographic parameters to produce purchase-intent reactions to a product or concept. It's the newest method on the list and the one missing from every decade-old "methods" article on page one of Google. The category was formally established in March 2026, when Qualtrics launched synthetic panels at its X4 Summit (SiliconANGLE, Mar 2026) and quantilope launched Category Twins (PR Newswire, Mar 2026).

When to use

Pre-launch, pre-panel exploration when you don't yet know what to survey real people about. High-variant concept screening where 40+ variants make recruited panels impractical. Early-stage price and positioning exploration. Continuous testing cycles where the cost of a recruited panel makes iteration prohibitive.

Cost and speed

Runs cost $5-$100 depending on size and vendor. DIY tools (including will.it.sell) sit at the low end. Timeline runs minutes to hours, not weeks.

Sample size

One hundred to five hundred simulated respondents per run is typical. Sample size is cheap. The real constraint is which decision you're trying to inform, not how many runs you can afford.

When not to use

Last-mile validation where a real purchase commitment will follow. Safety-critical or regulated claims testing. Niche B2B categories where training data is sparse and the model hasn't seen the buyer type.

Watch-outs

LLM-generated responses compress variance compared to human panels. Interpret distributions, not individual scores. Benchmark against a known product before you trust a new one. For the methodology underneath, see the science behind synthetic consumer research.
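A quick way to check for variance compression on a benchmark product is to compare the spread of synthetic scores against a human panel's scores for the same item. The sketch below uses made-up 1-5 purchase-intent ratings. Nothing here comes from a real study.

```python
from statistics import mean, variance


def variance_ratio(synthetic_scores: list[float], human_scores: list[float]) -> float:
    """Ratio of sample variances; values well below 1 suggest compressed synthetic output."""
    return variance(synthetic_scores) / variance(human_scores)


# Hypothetical ratings for the same known product.
human = [1, 2, 3, 4, 5, 5, 4, 2, 1, 3]
synthetic = [3, 3, 4, 3, 4, 3, 4, 4, 3, 3]

print(f"means: human {mean(human):.1f}, synthetic {mean(synthetic):.1f}")
print(f"variance ratio: {variance_ratio(synthetic, human):.2f}")
```

In this toy data the means are close but the synthetic spread is a fraction of the human one. That pattern is the reason to read distributions against a benchmark instead of trusting a single score.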

The 2026 market research methods decision matrix

This is the one-screen comparison. Every method in the guide, cross-cut on type, cost, speed, sample size, fit, and the thing that will hurt you if you ignore it.

| Method | Type | Typical cost | Speed | Sample size | Best for | Watch out for |
|---|---|---|---|---|---|---|
| Survey | Quant | $5k-$15k+ for 400 online | 1-3 wks | 400 general / 100-150 per subgroup | Prevalence, tracking, stated intent | Stated-intent inflation |
| In-depth interview | Qual | ~$500 each all-in | 1-2 wks | 5-20 | "Why" behind the number, unprompted language | Recruiting bias, hypothetical questions |
| Focus group | Qual | $10k-$30k project | 3-6 wks | 24-60 across groups | Stimulus reaction, group dynamics | Dominant-voice bias |
| Ethnography | Qual | $30k-$100k+ | 8-16 wks | 8-20 | Contextual/behavioral truth, cross-market | Budget, time |
| A/B test | Quant (behavioral) | Tool $0-$3k/mo | Sample-driven | 5k-20k per variant for 5-10% lift | Actual behavior at purchase moment | Needs live traffic; novelty effects |
| Concept test | Quant | DIY $2k-$5k; full $25k-$75k | 1-4 wks | 150-200 monadic; 100-150 sequential | Pre-launch variant comparison | Top-box intent inflation |
| Conjoint analysis | Quant | DIY from $900/yr; full $25k-$100k+ | 1-3 wks DIY; 8-14 wks full | 200 min; 400+ subgroups | Price/feature tradeoffs | Survey fatigue; hypothetical |
| Social listening | Hybrid (passive) | $100-$15k/mo | Always-on | Volume-driven | Brand tracking, trends, language | Non-representative sample |
| Synthetic consumer panel | Hybrid (simulated) | $5-$100 per run | Minutes-hours | 100-500 simulated | Pre-panel exploration, high-variant screening, iteration | Variance compression; needs calibration |

The 2026 market research methods decision matrix compares nine methods across cost ($5 per synthetic run to $100,000+ per ethnography), speed (minutes to 16 weeks), sample size (5 to 20,000), and fit. Surveys remain the default quantitative workhorse. Synthetic consumer panels are the newest entry and the only method cheap and fast enough to rerun on every iteration. Everything else in the list sits somewhere between those two poles.
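If you'd rather filter the matrix than screenshot it, here's a sketch that encodes the low end of each cost range and the slow end of each timeline, then shortlists by budget and deadline. The structure and names are mine. The interview cost assumes the five-interview minimum at about $500 each, and the 0.1-week figure for synthetic panels is a stand-in for "minutes to hours."

```python
from typing import NamedTuple, Optional


class Method(NamedTuple):
    name: str
    entry_cost_usd: float       # low end of the matrix cost range
    max_weeks: Optional[float]  # slow end of the timeline; None = not calendar-bound


MATRIX = [
    Method("Survey", 5_000, 3),
    Method("In-depth interview", 2_500, 2),   # assumes 5 interviews at ~$500 each
    Method("Focus group", 10_000, 6),
    Method("Ethnography", 30_000, 16),
    Method("A/B test", 0, None),              # timeline is driven by sample size
    Method("Concept test", 2_000, 4),
    Method("Conjoint analysis", 900, 3),      # DIY tier
    Method("Social listening", 100, None),    # always-on
    Method("Synthetic consumer panel", 5, 0.1),
]


def shortlist(budget_usd: float, deadline_weeks: float) -> list[str]:
    """Methods whose entry cost and slowest timeline both fit the constraints."""
    return [
        m.name
        for m in MATRIX
        if m.entry_cost_usd <= budget_usd
        and m.max_weeks is not None
        and m.max_weeks <= deadline_weeks
    ]


print(shortlist(budget_usd=3_000, deadline_weeks=3))
```

A/B tests and social listening are left out of the deadline filter on purpose, because neither has a fixed calendar length. Budget and timeline only narrow the field. The question you're answering still picks the method.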

How to pick a method for your stage

Match the method to the stage and the question, not to what your team has used before. Two quick rules do most of the work.

The stage-based rule

- Pre-idea: synthetic consumer panels plus social listening. Cheap, fast, surfaces language you wouldn't have guessed.

- Pre-panel exploration: in-depth interviews plus synthetic panels.

- Pre-launch: concept tests, conjoint for pricing, survey at scale.

- Post-launch: A/B testing, social listening, satisfaction surveys.

The question-based rule

- "Would people buy this?" A/B test if you can. Synthetic panel plus concept test if you can't.

- "Why are they doing that?" Interviews or ethnography.

- "How much would they pay?" Conjoint.

- "What do they already say about this category?" Social listening.

- "How common is this behavior?" Survey.

See the validation procedure that orders these steps.
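The two rules above can be sketched as a pair of lookup tables. The keys and the way they're combined are my own assumptions: this version prefers methods that appear in both rules and falls back to the question rule when nothing overlaps.

```python
STAGE_RULE = {
    "pre-idea": ["synthetic consumer panel", "social listening"],
    "pre-panel": ["in-depth interviews", "synthetic consumer panel"],
    "pre-launch": ["concept test", "conjoint analysis", "survey"],
    "post-launch": ["A/B test", "social listening", "satisfaction survey"],
}

QUESTION_RULE = {
    "would_people_buy": ["A/B test", "synthetic consumer panel", "concept test"],
    "why_are_they_doing_that": ["in-depth interviews", "ethnography"],
    "how_much_would_they_pay": ["conjoint analysis"],
    "what_do_they_already_say": ["social listening"],
    "how_common_is_it": ["survey"],
}


def recommend(stage: str, question: str) -> list[str]:
    """Methods that fit the question, narrowed to the stage when the two rules overlap."""
    by_question = QUESTION_RULE[question]
    overlap = [m for m in by_question if m in STAGE_RULE[stage]]
    return overlap or by_question


print(recommend("pre-launch", "would_people_buy"))      # ['concept test']
print(recommend("pre-idea", "why_are_they_doing_that"))  # no overlap, so the question rule wins
```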

What changed in market research between 2016 and 2026

Three shifts reshaped how research actually gets done in the last decade. None of them are in the old listicles.

Shift 1: synthetic panels became a real category

In March 2026, Qualtrics launched synthetic research at X4 Summit and quantilope launched Category Twins. HBR and MIT Sloan both ran features on AI in consumer research the following month. The category finally has a name, a vendor set, and cost benchmarks.

Shift 2: LLM-assisted qualitative coding

Interview transcripts that used to take days of human coding can now be first-passed in hours. The method is the same. Cost and speed changed by an order of magnitude.

Shift 3: behavioral data sources normalized

Social listening matured into a $12.15 billion market in 2026 (Research and Markets, 2026). Product-analytics data became a standard primary-research source alongside surveys. Behavior is no longer an afterthought; it's a first-class data type.

Research methods changed in three ways between 2016 and 2026: synthetic consumer panels became a named category (Qualtrics and quantilope launches, March 2026), LLM-assisted qualitative coding compressed interview analysis time, and social listening grew into a $12.15 billion market. Old lists treat this as a stable taxonomy. It isn't. The category expanded in exactly the dimension where the old lists are weakest: low-cost, fast-iteration methods that fit pre-panel work.

Frequently asked questions

What are the main market research methods?

Nine methods cover what you need in 2026: surveys, in-depth interviews, focus groups, ethnography and observational studies, A/B testing, concept tests, conjoint analysis, social listening, and synthetic consumer panels. Older lists stop at the first four or five. The newer methods (social listening, synthetic panels) address speed and cost constraints the classical methods simply don't.

What is the difference between qualitative and quantitative market research?

Qualitative methods (interviews, focus groups, ethnography) surface reasons and unprompted language in small samples of 5 to 60 people. Quantitative methods (surveys, concept tests, conjoint analysis) measure prevalence and effect sizes in samples of 100 to 400+ respondents, with at least 100 to 150 per subgroup. Social listening and synthetic consumer panels are hybrid: they return both textual and structured data at scale.

How much do market research methods cost?

Costs range from a few dollars to $100,000+ per study. Online surveys run $5,000-$15,000+ for 400 responses; focus-group projects run $10,000-$30,000; ethnographies run $30,000-$100,000+ (Drive Research, 2026). Synthetic consumer panel runs cost $5-$100 each. Conjoint DIY platforms start around $900 per year; full-service projects run $25,000-$100,000+. Budget is rarely the hard constraint. Picking the wrong method is.

Which market research method is best for a new product?

No single method is best; the stack depends on your stage. Pre-idea: synthetic panels plus social listening. Pre-launch: in-depth interviews, a concept test, and a conjoint on price. Post-launch: A/B testing and tracking surveys. The decision matrix above maps each method to the situation it fits.

Are synthetic consumer panels a real market research method?

Yes. The category was formally named in March 2026, when Qualtrics launched synthetic research at X4 and quantilope launched Category Twins (SiliconANGLE; PR Newswire). The method fits pre-panel exploration, high-variant concept screening, and iteration cycles. It doesn't replace last-mile validation with recruited real consumers. It replaces the guesswork before that validation.

How long does each market research method take?

Synthetic consumer panels run in minutes to hours. Surveys and DIY conjoint run in 1-3 weeks. Interviews and focus-group projects run in 1-6 weeks. Ethnographies and full-service conjoint projects run in 8-16 weeks (Drive Research, 2026). A/B tests are driven by required sample size, not calendar time; small effects need large samples.

Can you replace traditional market research with AI?

Not fully. Synthetic consumer panels and LLM-assisted coding compress cost and speed for pre-panel work and qualitative analysis. Last-mile validation, the decision to ship or kill a product, still benefits from recruited real consumers, A/B-tested behavior, or a hybrid of both. The 2026 playbook uses AI upstream and real panels downstream, not one instead of the other. See the evidence from validation studies.

The bottom line

Nine methods. Four axes. One decision matrix. That's the 2026 version of the list most pages are still writing as if it were 2018.

The interesting work in 2026 isn't "which method wins." It's "which sequence of methods answers my decision fastest at the lowest cost." Synthetic panels slot in upstream, where the old list had nothing. The classical methods keep their downstream roles. Each one is still the right answer to the specific question it was built for.

I'm building will.it.sell to compress the pre-panel exploration step from weeks to minutes, for teams who were never going to buy a $30,000 focus-group project in the first place. I'm not selling a replacement for recruited panels or A/B tests. The decision matrix above is the one I'm using to plan my own research stack, and it's the one I'd hand a founder asking where to start.
