Validate before you vibe code: product validation in the AI era

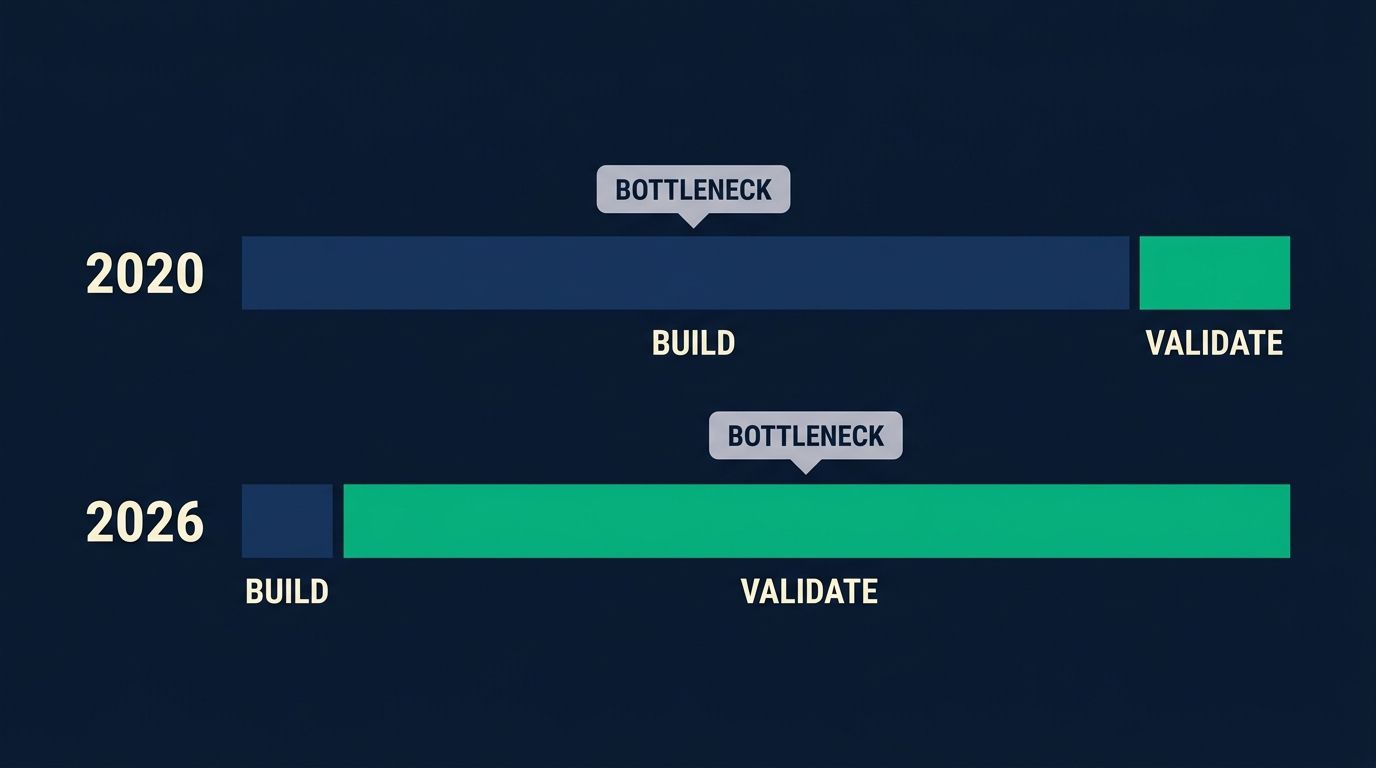

This week r/Entrepreneur had a 132-comment thread titled "How do people vibe code apps?". The same week, r/SideProject ran a 129-comment thread titled "Does anyone actually make money from building apps or is it all fantasy??". Same conversation. A year ago the hard question was "can I build this alone". Now the answer is a trivial yes. Lovable went from $0 to $100M ARR in eight months (TechCrunch, 2025). 25% of Y Combinator's Winter 2025 batch shipped codebases that were 95% AI-generated (TechCrunch, 2025). The bottleneck moved. Builders who win in 2026 aren't the fastest shippers. They're the fastest validators, and most founders haven't updated their playbook yet.

If you want a broader map of the validation methods on the table today, start with our guide on 8 methods to validate a product idea.

Key Takeaways

- Building an app is now cheap. Validating demand still isn't.

- 43% of failed VC-backed startups died from poor product-market fit (CB Insights, 2024).

- "Can I build this" used to filter bad ideas. It doesn't anymore.

- The fastest validator wins, not the fastest builder.

- Pre-code validation measured in hours, not months, is the new competitive edge.

The bottleneck moved and nobody told founders

In 2020 you wrote 40,000 lines yourself or paid a contractor $40K. In March 2025, YC CEO Garry Tan reported that 25% of the Winter 2025 batch shipped with codebases that were 95% AI-generated (TechCrunch, 2025). The constraint that forced founders to think is gone, and nothing has replaced it.

Months of engineering work used to do two jobs at once. It shipped the product and it filtered ideas. You couldn't justify three months of coding without a real customer conversation first. The pain of building was doing the validation for you.

That pain is gone. Open Cursor, Lovable, or Bolt and you can ship something that looks production-ready before lunch. Lovable's own guide says a simple prototype takes "a few hours to 2 days" and an MVP with auth and a database takes "1-2 weeks" (Lovable, 2026). Y Combinator now describes its current batches as "the fastest-growing, most profitable in fund history because of AI" (CNBC, 2025).

Where does it show up? Product Hunt. New AI-built tools launch every day. Most are ghost towns by week two. Nobody writes the post-mortem, because the founder is already three prompts into the next one.

Observation: Talking to real users was always expensive. But for years, building was so much more expensive that nothing else registered. Now building costs an afternoon, and the cost of actually finding buyers, listening to them, and understanding what they'll pay for is what founders hit head-on. That cost didn't change. It just stopped being hidden.

Why vibe coding breaks the old validation playbook

The old playbook assumed engineering effort was the scarce resource. Validation got baked in by default. Today, 80% of developers use AI tools and trust in AI accuracy has dropped to 29% from 40% the year before (Stack Overflow 2025 Developer Survey, 2025). The speed is real. The judgment has to come from somewhere else now.

Here's the awkward part: 72% of professional developers say vibe coding isn't part of their paid work (Stack Overflow 2025 AI survey, 2025). People who build software for a living look at this wave and keep their distance. Why?

They see the failure mode from the inside. 66% of developers spend extra time debugging AI code that's "almost right but not quite" (Stack Overflow, 2025). That's the engineer's complaint. The founder's failure mode is worse. It's not "bad code". It's "a beautifully built product nobody wanted".

Collins Dictionary named "vibe coding" its Word of the Year 2025 (Collins via Wikipedia, 2025). The word won because the behavior exploded. But it hides a split. Engineers see a code quality story. Founders live a demand story. Same tool, different problem.

Citation capsule: 80% of developers now use AI tools in their workflows, but only 29% trust AI accuracy, down from 40% a year earlier (Stack Overflow 2025 Developer Survey, 2025). Adoption is racing ahead of trust. For founders, that trust gap shows up as products that ship before anyone asked for them.

What are the two failure modes of vibe-coded apps?

Two things can go wrong. One is loud and one is quiet. The loud one gets all the coverage. The quiet one kills more startups. CB Insights analyzed 431 VC-backed shutdowns since 2023 and found that 43% died from poor product-market fit, while 70% ran out of capital (CB Insights, 2024). The two causes overlap, which is why the figures add up to more than 100%. Capital runs out because the product didn't find a market, not the other way around.

Failure mode 1: the code is broken

This is the crowded conversation. Every engineering blog covers it. Technical debt, CVEs, brittle architecture, prompts that pass tests and fail in production. The critique is real and the engineers writing it aren't wrong.

It's just not the topic of this post. If your app crashes, you know. The feedback is instant, and your ops dashboard screams at you.

Failure mode 2: the product works, nobody wants it

This is the quiet failure. It doesn't get a single post-mortem on Hacker News. The landing page loads. The auth flow works. The Stripe webhook fires. The signup line is flat.

The math is ugly. If you can ship a finished-looking product in 48 hours, you can spend 48 months fooling yourself that the next feature will save it. Speed accelerates denial. When the build took months, the silence of zero signups forced a reckoning. Now the silence is just Tuesday.

Observation: I'm shipping will.it.sell with AI tools and I've felt the exact moment this post is about. The demo worked, the database worked, the emails sent. And I still had to ask myself whether anyone had actually asked for it. That question doesn't get easier when the code gets faster.

If you want to pressure-test the demand side specifically, our guide on how to measure purchase intent breaks down five methods and their accuracy.

What does "validate before you build" actually mean in 2026?

The old definition was "talk to 30 customers before you hire your first engineer". The 2026 version is tighter. Answer four questions before you open Cursor. Among failed VC-backed startups, 43% cite poor product-market fit as a cause of death (CB Insights, 2024). None of those companies got blocked by engineering. They got blocked by a question they didn't ask.

Question 1. Is the problem real for someone other than me? Friends-and-family feedback is poison. Interest isn't intent. If the only people nodding are the ones who love you, you have zero data points.

Question 2. Would someone actually pay for this? Pre-orders, deposits, credit cards. "I'd use it" means nothing. Money on the table is the only honest signal you get.

Question 3. Who exactly is the target, and what makes them different? "B2C consumers" isn't a target. A 34-year-old in Austin with a dog and no time for meal prep is. If your whole audience definition is a two-word label short enough for a business card, you haven't defined it. Our post on defining your target audience walks through the specifics.

Question 4. What would the first 100 buyers look like? If you can't picture them in detail, you don't have a product yet. You have a vibe.

None of this takes three months. A first pass takes a long afternoon, and the full test below takes three days. And the same AI coding tools that made building cheap also made the cost of skipping these questions higher, because a product nobody wants now ships in a weekend instead of a quarter.

A 72-hour pre-code validation checklist

Three days of deliberate work before the first prompt to Cursor. Not a month, not a week. Three days is enough to answer the four questions above and set a kill threshold. For context, Lovable raised $330M at a $6.6B valuation in December 2025 (TechCrunch, 2025). The tooling isn't your bottleneck. Your judgment is.

Day 1: write the positioning, not the app

One paragraph. Who is this for, what problem, what do they use today, why is the current option painful enough to switch. If you can't write that paragraph without hedging, the product doesn't exist yet.

Gut check: read the paragraph aloud to someone outside your family. If they say "who is it for again?", rewrite it before doing anything else.

Day 2: test the demand signal

Three signals, in order of cost and effort:

- AI consumer research first. Run a purchase intent test against a defined panel in minutes. It's the cheapest, fastest directional signal. If the synthetic panel can't find interest, you've likely mis-framed the product, the audience, or the price. Fix that before you spend on the other two.

- Five to ten problem interviews with strangers. Not friends. Cold outreach via LinkedIn, Reddit, or niche Discord communities. Ask about the last time the problem hit them, not about your solution.

- Landing page plus $50 of paid traffic. Google, Meta, or TikTok depending on the audience. Measure email captures, not clicks. Only run this if the first two signals pointed the same direction; otherwise you're paying to validate a hypothesis you haven't tightened yet.
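
One caveat on that last signal: $50 buys very little traffic, so the measured conversion rate is noisy. The sketch below is an illustration, not part of the checklist itself. It uses the standard Wilson score interval to show the plausible range behind a small sample. The function name `wilson_interval` and the example numbers (50 visitors, 2 email captures) are assumptions chosen for illustration, not data from any real campaign.

```python
import math


def wilson_interval(captures: int, visitors: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a conversion rate.

    captures: number of email signups (hypothetical input)
    visitors: number of landing page visitors (hypothetical input)
    z:        normal quantile, 1.96 for roughly 95% confidence
    """
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    if not 0 <= captures <= visitors:
        raise ValueError("captures must be between 0 and visitors")

    p = captures / visitors
    denom = 1 + z * z / visitors
    center = (p + z * z / (2 * visitors)) / denom
    half_width = z * math.sqrt(
        p * (1 - p) / visitors + z * z / (4 * visitors ** 2)
    ) / denom
    # Clamp to [0, 1] to absorb floating point drift at the edges.
    return max(0.0, center - half_width), min(1.0, center + half_width)


if __name__ == "__main__":
    # Hypothetical result: 2 email captures from 50 visitors (4% observed).
    low, high = wilson_interval(2, 50)
    print(f"observed 4.0%, plausible range {low:.1%} to {high:.1%}")
```

With those example numbers the observed rate is 4%, but the interval runs from about 1% to about 13%. A small paid test can point in a direction, but it can't cleanly separate "just under 2%" from "just over 2%". Treat the result as a reason to look at the other two signals, not as a precise measurement.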

Day 3: set a kill threshold before the data comes in

Write the go or no-go criteria on paper before you look at the data. Examples: "If the landing page converts under 2%, no build." "If 8 of 10 interviewees describe the problem unprompted, build."

Pre-commitment is how you beat motivated reasoning. Once the first signup comes in, your brain will find reasons to keep going. Decide now, not then.
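
If it helps to make the pre-commitment concrete, here is a minimal sketch of the two example criteria as code. It assumes both bars must be cleared before building; the post's examples state them as separate rules, so combining them with "and" is my assumption. The names `KillThresholds` and `decide` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KillThresholds:
    """Go or no-go criteria, fixed before any data arrives."""
    min_landing_conversion: float = 0.02  # "converts under 2%, no build"
    min_unprompted_interviews: int = 8    # "8 of 10 ... unprompted, build"


def decide(
    thresholds: KillThresholds,
    visitors: int,
    email_captures: int,
    unprompted_mentions: int,
) -> str:
    """Return "build" only if every pre-committed bar is cleared."""
    if visitors <= 0:
        return "no-go"  # no traffic means no evidence either way
    conversion = email_captures / visitors
    if conversion < thresholds.min_landing_conversion:
        return "no-go"
    if unprompted_mentions < thresholds.min_unprompted_interviews:
        return "no-go"
    return "build"


if __name__ == "__main__":
    # Frozen on Day 3, before looking at any results.
    thresholds = KillThresholds()
    # Hypothetical results: 200 visitors, 3 captures, 9 unprompted mentions.
    print(decide(thresholds, visitors=200, email_captures=3, unprompted_mentions=9))
```

The dataclass is frozen on purpose. Once the thresholds are written, the only way to change them is to write new ones in plain sight, which is the point of pre-commitment.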

Validation methods ranked by speed, cost, and signal quality

| Method | Time | Cost | Signal quality |
|---|---|---|---|
| Problem interviews (cold) | 5-10 days | $0-$50 | High |
| Landing page plus paid traffic | 3-7 days | $50-$500 | High |
| Pre-order or deposit page | 1-2 weeks | $0-$200 | Highest |
| AI consumer research panel | Minutes | $0-$50 | Medium-High (directional) |
| Friends and family survey | 1 day | $0 | Very Low (biased) |
| Build the MVP, see who shows up | 1-3 days | $20-$100 tool cost | Very Low (survivorship bias) |

For deeper coverage of how AI panels actually work, read the science behind synthetic consumer research. If you want to compare variations against the same audience, see A/B testing product variations on one consumer panel.

Where does AI consumer research fit in the stack?

AI consumer research isn't a replacement for real customers. It's a faster directional signal. CB Insights data shows 43% of failed startups cited poor product-market fit (CB Insights, 2024), and a synthetic panel can run the cheap checks that flag a fit problem in minutes. The point is to pressure-test positioning, audience, and price before you spend a dollar on ads or an hour on interviews.

Where it helps most:

- Which of three audience definitions reacts strongest?

- Which of four positioning angles reads as "for me"?

- Where does purchase intent collapse first: price, feature set, or positioning?

Where it doesn't replace human work:

- Qualitative depth interviews that surface problems you didn't know existed.

- Live pre-orders with real payment methods.

Observation: A directional signal in minutes doesn't replace customer interviews. It tells you which interviews are worth doing. That's the whole pitch. You still need humans, you just don't need them before you know which questions to ask.

For the evidence base on how close AI consumer panels get to real human responses, see our deep dive on validating AI consumer research against real studies.

Frequently asked questions

Is vibe coding bad for startups?

No, but it's misread. Vibe coding is a build accelerator, not a business accelerator. 80% of developers use AI tools (Stack Overflow, 2025), and the tools work. The risk is using all that speed to skip the validation step that used to be forced by the slow build.

Do I still need to talk to customers if I can build an MVP in a day?

Yes, more than ever. 43% of failed VC-backed startups died from poor product-market fit (CB Insights, 2024). AI coding tools removed the cost of shipping, but they didn't remove the cost of shipping the wrong thing. Customer conversations are the one step the tools can't do for you.

What is the cheapest way to validate a product idea before writing code?

A landing page plus $50 of paid traffic gives a real demand signal in under a week. Problem interviews with cold strangers cost $0 and pressure-test the problem itself. AI consumer panels return a directional purchase intent score in minutes. Among 431 VC-backed shutdowns, 43% cited poor product-market fit (CB Insights, 2024). All three steps cost less than one failed launch.

How do I know if my AI-built app is failing because of the idea or the build?

Check the funnel. If signups are flat, the idea is the suspect, not the code. 66% of developers spend extra time debugging AI code that's "almost right but not quite" (Stack Overflow, 2025), so broken code usually screams at you. Silence on the signup line is a demand signal, not a bug.

Can AI consumer research replace real customer interviews?

No. It's a directional layer, not a replacement. Synthetic panels are fast enough to test five audiences and three price points before lunch, which narrows the field. Real interviews surface problems you didn't know existed. 43% of failed startups missed product-market fit (CB Insights, 2024), and closing that gap takes both signals, not one.

The frame shift, and what comes next

Building used to be expensive, so validation was a luxury. Building is cheap now, so validation is the whole game. 25% of YC Winter 2025 startups shipped 95% AI-generated code (TechCrunch, 2025). Lovable hit $100M ARR in eight months (TechCrunch, 2025). The tooling gap is closed. The judgment gap is wide open.

Builders who ship faster than they validate are competing in the wrong race. The bottleneck moved from "can I build this" to "should I build this", and the founders who win in 2026 are the ones who noticed in time.

I'm building will.it.sell because I watched this pattern repeat. Pre-revenue beta, looking for testers. Register for a free account, send me a message, and I'll hand you free credits to run your first purchase intent test before you open Cursor.
